Nearly There!

Last month I ended with:

If all goes according to plan, next month’s Dev Log will be about how smoothly everything went 🙂

… smoothly is not exactly how I’d describe the last few weeks.

We’ve decided to push the launch back another two weeks to June 15th. We don’t take this decision lightly, but since the three existing Haven sites are still functional, we feel more comfortable taking our time to polish things off a bit more rather than rush to meet an imaginary deadline.

The primary causes of the delay are:

- The Admin site and its functions for processing uploaded assets were more challenging to implement than expected. This is 90% complete now, but it has delayed the rest of my tasks.

- We’re reworking the Pawn Shop scene a bit. I’d like this to be a stunning representation of what’s possible with our assets, but it’s proving a challenge to balance the overall artwork with individual asset displays.

- We’ve got over 700 existing assets to port over to the new platform, which has new technical requirements since we’re adding more customizable download options. Doing anything 700 times is no small task, and we have to be careful to handle the edge cases of unusual assets.

I believe June 15th is a reasonable target for this, as we have the bulk of the work (that I’m aware of) complete already. But whether I call it “finished” by then or not, you can always see our latest progress on the Beta site.

Delays aside, here’s the progress we made this month:

Home Page

I’m aware that most regular users would rather have the asset library as their home page for quicker access, but I think it’s more valuable to have a kind of “landing page” for new users, so we can give them an idea of what Poly Haven really is (other than just an asset library).

A big part of implementing this was wrangling the Patreon API and its webhooks to get lists of our patrons to display on the home page and in the footer. Working with external APIs reliably and securely is always a challenge, and Patreon's lack of support and documentation has always made this a nightmare.
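With webhooks, the main security step is checking that a request really came from Patreon before trusting its contents. As we understand Patreon's webhook documentation, each request carries an `X-Patreon-Signature` header. That header holds the hex HMAC-MD5 digest of the raw request body, keyed with the webhook's secret. The minimal Python sketch below performs that check. `verify_patreon_signature` is a hypothetical helper name, not part of any Patreon library, so confirm the details against Patreon's current docs before relying on it.

```python
import hashlib
import hmac


def verify_patreon_signature(body: bytes, signature_header: str, webhook_secret: str) -> bool:
    """Return True if `body` matches the signature sent with the webhook.

    Assumption: the signature is the hex HMAC-MD5 digest of the raw request
    body, keyed with the webhook secret (our reading of Patreon's docs).
    """
    expected = hmac.new(webhook_secret.encode("utf-8"), body, hashlib.md5).hexdigest()
    received = signature_header.strip().lower()
    # compare_digest runs in constant time, so it doesn't leak how many
    # leading characters matched; comparing bytes accepts any header content.
    return hmac.compare_digest(expected.encode("utf-8"), received.encode("utf-8"))
```

Verify the raw bytes before parsing the JSON. Re-serializing a parsed payload can change whitespace or key order, and then the digest no longer matches.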

Additionally, in the spirit of transparency, we're now also showing the portion of our funding that comes from corporate sponsorships outside of Patreon. Next week I'll be working on a dedicated, automated finance report page showing more detail on earnings, spending and savings.

Bulk Asset Processing

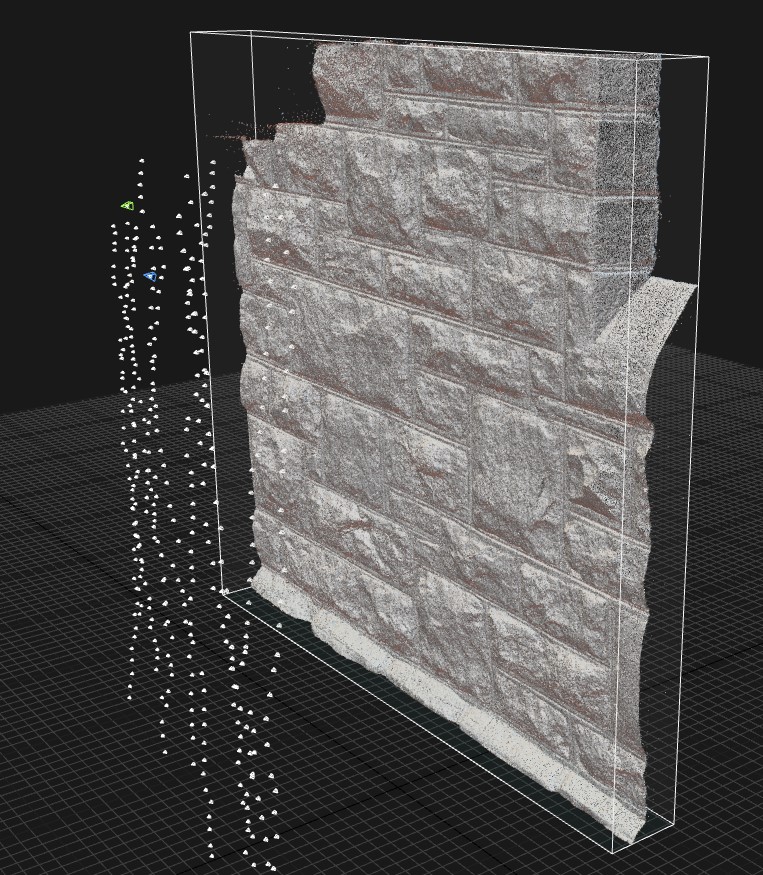

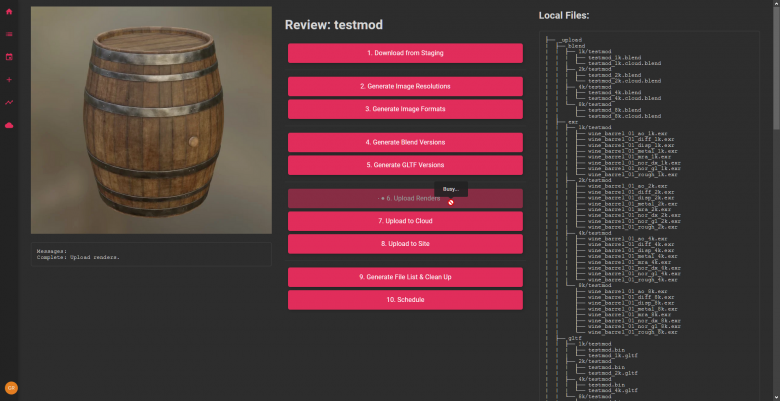

I spent most of my time this month building a private interface for uploading and processing our assets.

I initially thought this would only take a day or two, since I'd built similar tools for the existing platforms in the past.

But as with a lot of systems I've worked on lately, things turned out to be much more complex than I expected. We're now building these tools dynamically in JavaScript, rather than relying on offline scripts as we did previously.

The challenge was handling all three asset types and the edge cases of each (such as inconsistent file naming, unusual texture maps, or complex material setups). It also had to be done transparently, so we can spot issues as they pop up, before moving on to a next step that might break things.
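The "transparent" part boils down to a simple pattern. Run each processing step in order, and stop as soon as one step reports problems, rather than letting a bad file name silently break a later step. Below is a minimal Python sketch of that pattern, not our actual admin code. It assumes a hypothetical `<slug>_<map>_<res>.<ext>` naming convention and a made-up set of map names.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical conventions, for illustration only.
KNOWN_MAPS = {"diff", "nor_gl", "rough", "disp", "ao"}
REQUIRED_MAPS = {"diff", "nor_gl", "rough"}
EXTENSIONS = {"png", "exr", "jpg"}


@dataclass
class StepResult:
    name: str
    issues: List[str] = field(default_factory=list)


def parse_name(name: str) -> Tuple[Optional[str], str, str]:
    """Split '<slug>_<map>_<res>.<ext>' into (map, res, ext); map is None if unrecognized."""
    stem, _, ext = name.rpartition(".")
    base, _, res = stem.rpartition("_")
    # Try longer map names first so a multi-part name like 'nor_gl' wins over any shorter suffix.
    map_type = next(
        (m for m in sorted(KNOWN_MAPS, key=len, reverse=True) if base.endswith("_" + m)),
        None,
    )
    return map_type, res, ext


def check_file_names(asset: Dict) -> List[str]:
    issues = []
    for name in asset["files"]:
        map_type, res, ext = parse_name(name)
        if ext not in EXTENSIONS or not re.fullmatch(r"\d+k", res):
            issues.append(f"unexpected name: {name}")
        elif map_type is None:
            issues.append(f"unknown map type: {name}")
    return issues


def check_required_maps(asset: Dict) -> List[str]:
    found = {parse_name(name)[0] for name in asset["files"]}
    return [f"missing map: {m}" for m in sorted(REQUIRED_MAPS - found)]


Step = Callable[[Dict], List[str]]
STEPS: List[Tuple[str, Step]] = [("names", check_file_names), ("maps", check_required_maps)]


def run_pipeline(asset: Dict, steps: List[Tuple[str, Step]] = STEPS) -> List[StepResult]:
    """Run steps in order, stopping after the first one that reports issues."""
    results = []
    for name, step in steps:
        result = StepResult(name, step(asset))
        results.append(result)
        if result.issues:
            break  # surface the problem before later steps build on bad input
    return results
```

Consider an asset whose files are `brick_wall_diff_4k.png` and `brick_wall_normal.png`. It stops at the `names` step with the single issue `unexpected name: brick_wall_normal.png`, and the map check never runs.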

Functionally this is now complete, except for the last step of scheduling a publication date.

After that, it's just a matter of uploading and running this tool on each new asset (several dozen), as well as on most of our hundreds of older assets, which need reprocessing in some way to conform to the new standards.

More Texture Scans

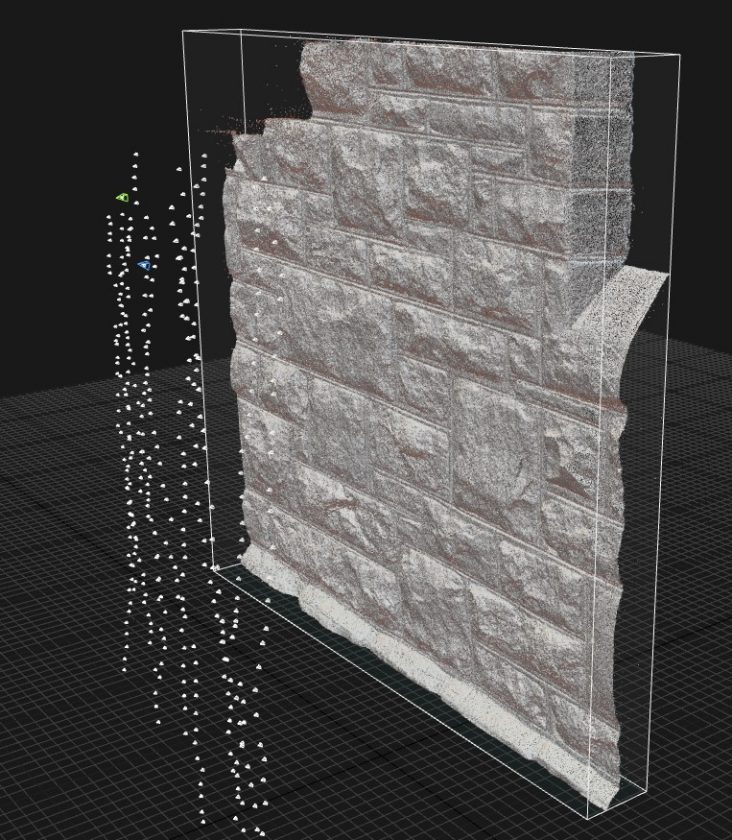

Rico started off this month by processing a few more of Meat's texture scans. As mentioned in a previous blog post, 16k texture outputs require much higher polygon density per scan. The raw geometry for a single scan can now easily exceed 300 million triangles, and a typical scan project takes up 50-80 GB of hard drive space.
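Some quick arithmetic puts the 16k figure in perspective. It assumes a square 16k map is 16384 × 16384 pixels. It also uses a simplifying rule of thumb, not a stated requirement: that the mesh should carry roughly one triangle per output texel to give the bake enough detail.

```latex
\begin{aligned}
16384^2 &= 268{,}435{,}456 \approx 2.7 \times 10^{8} \text{ texels} \\
8192^2 &= 67{,}108{,}864 = \tfrac{1}{4} \cdot 16384^2 \\
\frac{3 \times 10^{8}\ \text{triangles}}{2.7 \times 10^{8}\ \text{texels}} &\approx 1.1 \text{ triangles per texel}
\end{aligned}
```

Doubling the resolution from 8k to 16k quadruples the number of texels. Under that rule of thumb, a 300-million-triangle scan is about what a 16k output calls for.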

James and Rico also spent time fixing and retouching some of the older texture scans. Our workflow for scanned surfaces continues to evolve, but we’re also always trying to improve the existing ones in our library.

Rob recently went on a texture scanning trip and wrote a post about it on his own blog. It explains some interesting methods for dealing with delighting, as well as the consequences and opportunities of taking a trip like this during the current pandemic.

Prep Work & Organization

In preparation for the launch, we've been doing a lot of work getting all the textures and models ready for the new site. We have to make sure every file follows the correct naming and formatting conventions, bit depth, resolution and so on. Rico has also been working on a new lighting setup and thumbnail renders for the models.
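Checks like these are easy to automate once the image metadata has been read. The Python sketch below validates bit depth and resolution from already-extracted values and leaves out reading the actual files. The allowed bit depths per format and the power-of-two rule are hypothetical examples, not our real spec.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical rules for illustration only; not our actual spec.
ALLOWED_BIT_DEPTHS = {"png": {8, 16}, "exr": {16, 32}, "jpg": {8}}


@dataclass
class TextureInfo:
    name: str
    fmt: str
    width: int
    height: int
    bit_depth: int


def is_power_of_two(n: int) -> bool:
    return n > 0 and (n & (n - 1)) == 0


def validate_texture(tex: TextureInfo) -> List[str]:
    """Return a list of human-readable problems (empty if the texture passes)."""
    problems = []
    allowed = ALLOWED_BIT_DEPTHS.get(tex.fmt)
    if allowed is None:
        problems.append(f"{tex.name}: unsupported format {tex.fmt!r}")
    elif tex.bit_depth not in allowed:
        problems.append(f"{tex.name}: {tex.bit_depth}-bit not allowed for {tex.fmt}")
    for label, size in (("width", tex.width), ("height", tex.height)):
        if not is_power_of_two(size):
            problems.append(f"{tex.name}: {label} {size} is not a power of two")
    return problems
```

Run it over every file and keep the non-empty results. That produces a to-do list of files to fix, instead of stopping at the first bad one.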

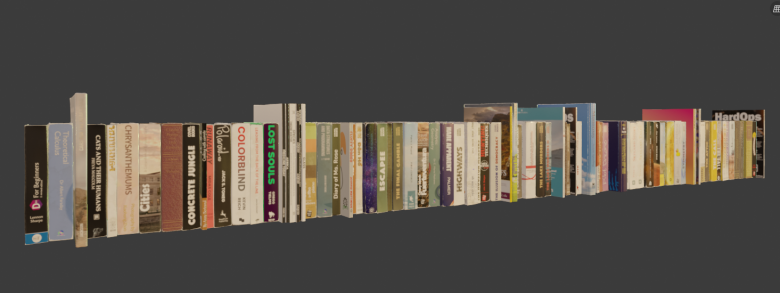

Books!

Last year we organized a book design contest, which was super successful.

It’s taken us way too long to get these published as 3D models, but we’re finally doing it, and I think it was worth the wait 🙂

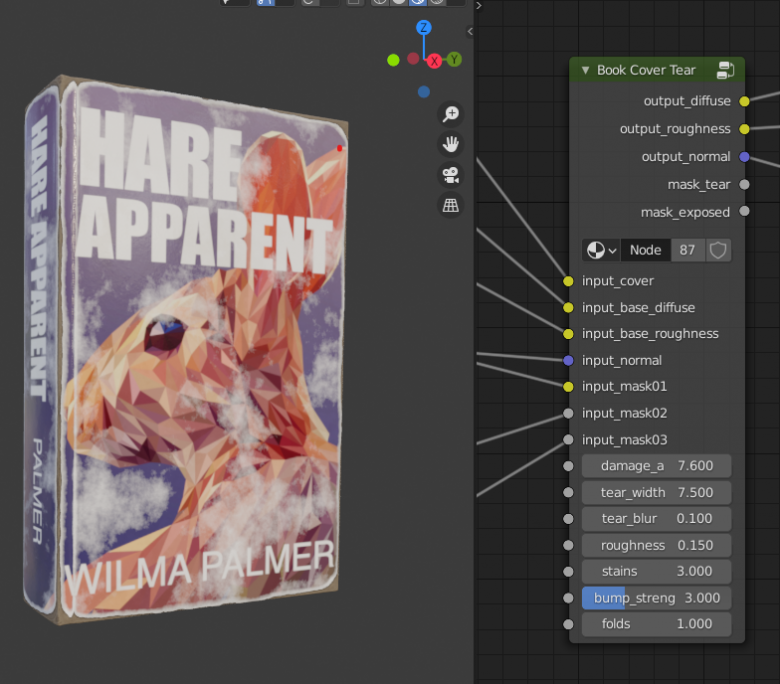

James created an amazing semi-procedural and customizable shader for all these books, in different styles (hard cover, soft cover, and magazines), with controls for age, damage and tear effects.

These are all randomized by default, producing a good variety of visual interest with very little effort.
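In Blender, a shader can get a per-object random value from the Object Info node's Random output. The Python sketch below imitates that idea outside Blender; it is not how James's shader actually works. It derives stable pseudo-random age, damage and tear values from each book's name, and the parameter names and ranges are hypothetical.

```python
import hashlib
import random
from typing import Dict


def book_params(object_name: str, seed: int = 0) -> Dict[str, float]:
    """Derive stable pseudo-random wear settings for one book.

    Hashing the object name gives each book its own look, while the same
    book always gets the same look between renders.
    """
    digest = hashlib.sha256(f"{seed}:{object_name}".encode("utf-8")).digest()
    rng = random.Random(int.from_bytes(digest[:8], "big"))
    return {
        "age": rng.random(),           # 0 = brand new, towards 1 = very old
        "damage": rng.random() * 0.5,  # capped at half strength
        "tear": rng.random() ** 3,     # cubing skews values towards 0, so most books have few tears
    }
```

The sketch uses SHA-256 rather than Python's built-in `hash()`. String hashes are salted per process by default, so `hash()` would give each book a different look on every run.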

Thanks to everyone who submitted designs a while back. They were great to work with, and there are some great Easter eggs to find if you read them.

-James
